Hi @paul29

Will Gemini video vision be possible at some point?

Yup! The most recent release (v4.0) has image vision for GPT, Gemini and Claude. Video for Gemini will be in the next release, which will be out by the end of next week. I’ll message here when it’s available.

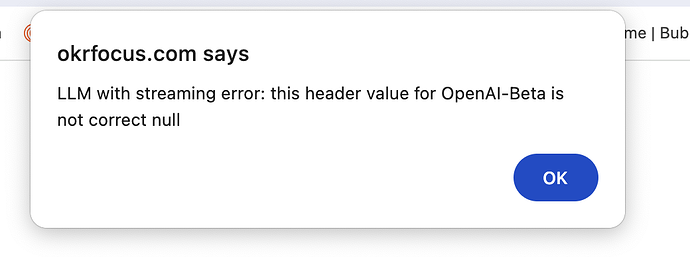

Hi Paul. I upgraded to the latest version of the plugin and got the following error message:

I did not change anything else. Downgraded to 3.0 and working fine again.

Hi Rui,

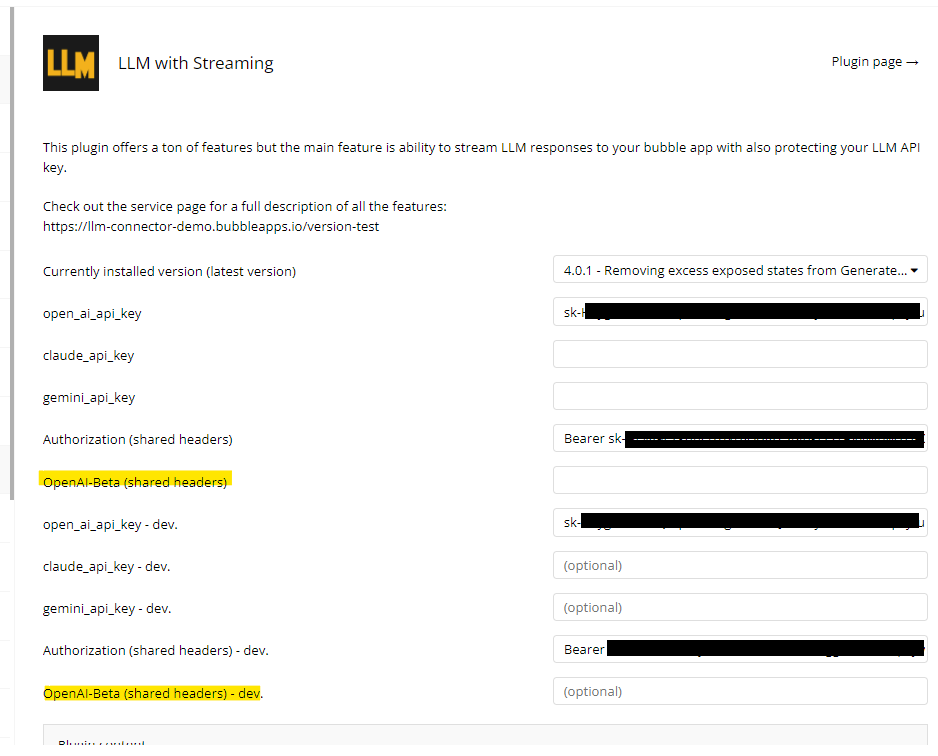

Did you set the header value in the plugin settings?

This is where you need to input:

assistants=v2
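For anyone testing the same call outside the plugin, here is a minimal sketch of the headers an Assistants v2 request carries. `build_assistants_headers` is a hypothetical helper name used only for illustration, and the key shown is a placeholder; the plugin builds these for you from its settings.

```python
def build_assistants_headers(api_key: str) -> dict:
    """Return the HTTP headers for an OpenAI Assistants API v2 request.

    Hypothetical helper for illustration; not part of the plugin.
    """
    return {
        # The Authorization header is the only place "Bearer" belongs.
        "Authorization": f"Bearer {api_key}",
        "Content-Type": "application/json",
        # Opt in to version 2 of the Assistants API.
        "OpenAI-Beta": "assistants=v2",
    }


headers = build_assistants_headers("sk-your-key-here")  # placeholder key
print(headers["OpenAI-Beta"])
```

If the `OpenAI-Beta` value is anything other than `assistants=v2`, that is the first thing to check when a v2 call fails after upgrading.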

Hi all,

I have just pushed a fix for an issue where the streamed response was not including new lines (i.e. multiple paragraphs were getting combined into one big paragraph). This is the most recent release (v4.0.2).
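To illustrate the kind of bug this is (not necessarily what happened inside the plugin), one common way to lose paragraph breaks is trimming each streamed chunk before appending it. A rough sketch with made-up chunks and hypothetical function names:

```python
def join_stripped(chunks):
    # Buggy variant: strip() discards newline-only chunks, so paragraph
    # breaks vanish and everything collapses into one paragraph.
    return " ".join(c.strip() for c in chunks if c.strip())


def join_verbatim(chunks):
    # Safer variant: append every chunk exactly as it arrived.
    return "".join(chunks)


# Made-up example of how a two-paragraph reply might arrive in pieces.
chunks = ["First paragraph.", "\n\n", "Second ", "paragraph."]

print(repr(join_stripped(chunks)))
print(repr(join_verbatim(chunks)))
```

The first call returns a single run-on paragraph, while the second keeps the blank line between the two paragraphs.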

I have just pushed another update to include Groq (not to be confused with X’s Grok) which hosts open source models on their servers. You still have to pay to use these open source models as someone else is doing the hosting, but these models are insanely cheap:

Image source: https://wow.groq.com/

Llama 3 70B is Meta’s recent model from about a month ago that performs almost as well as GPT-4 but, based on the above pricing, is 15x/38x (input/output tokens) cheaper.

Next release will include X’s Grok and video support for Gemini. The following release will include support for CrewAI, allowing you to build out agentic workflows.

Hi @ruimluis7

You’ve filled in the wrong value in the OpenAI-Beta field. You need to set this to assistants=v2

Let me know if that solves it for you.

Also, the full values aren’t visible, but I would strongly recommend redacting your screenshots so they don’t show so much of your API keys.

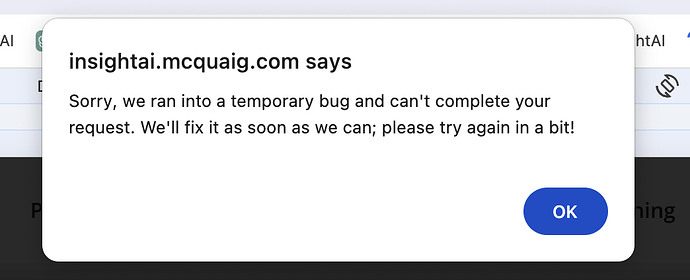

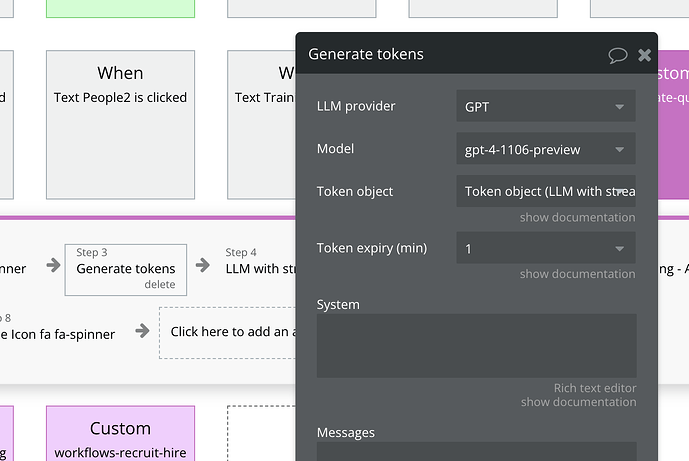

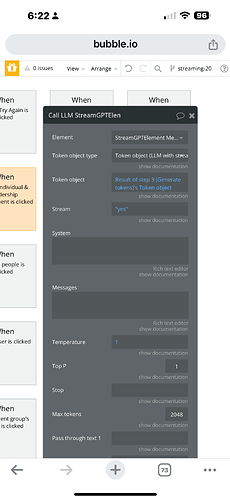

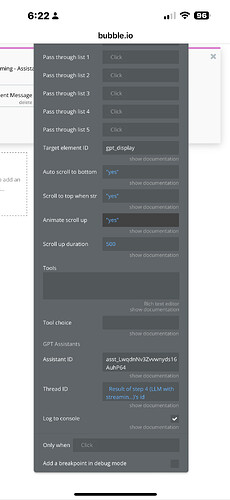

Can you please send a screenshot of your generate tokens property window and the call llm property window?

I realized you did previously. Looking into it now

You have an incorrect value in your OpenAI API key field. You need to remove “Bearer”. Bearer is only required for Authorization (shared headers).
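To make the distinction concrete, here is a small sketch of the idea: the key field holds the bare key, and "Bearer" is added only when the Authorization header is built. Both function names are hypothetical and the key is a placeholder; this is not the plugin's actual code.

```python
def normalize_api_key(raw: str) -> str:
    """Return the bare API key, dropping an accidental 'Bearer ' prefix."""
    key = raw.strip()
    prefix = "bearer "
    if key.lower().startswith(prefix):
        key = key[len(prefix):].strip()
    return key


def authorization_header(raw: str) -> str:
    """Build the Authorization header value from a possibly prefixed key."""
    return f"Bearer {normalize_api_key(raw)}"


# Placeholder key, pasted with the prefix by mistake.
print(normalize_api_key("Bearer sk-your-key-here"))
print(authorization_header("Bearer sk-your-key-here"))
```

Either way the header ends up with exactly one "Bearer", which is what the API expects.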

See my screenshot from before

I have just pushed a new version that includes GPT’s newest model gpt-4o

@rafal_k

Hi Paul, thank you very much. I missed posting about it on the forum - you’re faster.

There’s some issue with the update (or the validator):

Can you give me some more context please? I’m not sure where these issues are coming from. Where are those screenshots from?

I guess that is the issue checker but I can’t recreate this on my end.

In the ‘generate tokens’ workflow stage (in two workflows, to be precise), I changed the model to gpt-4o and the issue tracker showed these errors instantly.

The issue is gone.